Issue #162 - adding more senses to the human body; MIT's robot Cheetah learned new tricks; a neural network coded using DNA; exoskeletons; and more!

This week - adding more senses to the human body; MIT's robot Cheetah learned new tricks; a neural network coded using DNA; exoskeletons; and more!

MORE THAN A HUMAN

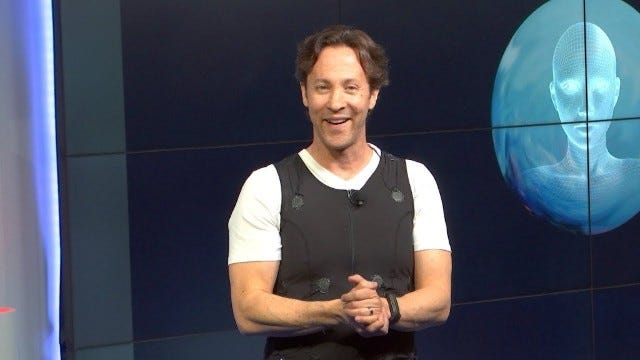

David Eagleman: "Can We Create New Senses for Humans?"

You may know David Eagleman from his work on The Brain series or his books on neuroscience. In his free time, he builds devices that extend human senses, such as a smartphone-controlled vest that translates sound into patterns of vibration for the deaf. Here is his talk at Google, where he explains his work.

The 'super suit' that helps people move

Thanks to soft exoskeletons like the one described in this article, the elderly might toss away their walking sticks or wheelchairs and regain their mobility.

Exoskeleton that allows humans to work and play for longer

Here's a short trip to MIT's Biomechatronics Lab to see what kinds of exoskeletons they are working on and how they plan to make you into a cyborg. There you can find an exoskeleton that will transform you into a perfect pianist, or one that will allow you to run faster and longer.

A prosthetic hand that feels

In this article, Stefan Schulz, CEO of Vincent Systems, explains how his new prosthetic hand works.

ARTIFICIAL INTELLIGENCE

Scientists Invented AI Made From DNA

Researchers from Caltech have created a neural network that can recognise handwritten numbers. "No big deal", someone might say. But these guys built their network without using silicon chips. They coded their algorithm in DNA. It’s a novel implementation of a classic machine learning test that demonstrates how the very building blocks of life can be harnessed as a computer.

ROBOTICS

MIT’s Cheetah ‘bot walks up debris-littered stairs without visual sensors

Cheetah is a four-legged robot from MIT. This video presents all the new tricks the robot has learned, from a slow walk to a gallop, from walking outside to jumping on desks. The video also shows a test where the robot was "blinded" and asked to climb stairs. An impressive little robot, that Cheetah.

Robots can learn a lot from nature if they want to ‘see’ the world

Making robots capable of seeing the world around them is a key step towards bringing the robots we have seen in science fiction to reality. Nature, as always, offers invaluable lessons on how to make efficient eyes, and robotics researchers study insects and other animals to learn how to make robots see.

Self-driving cars are headed toward an AI roadblock

There’s growing concern among AI experts that it may be years, if not decades, before self-driving systems can reliably avoid accidents. As self-trained systems grapple with the chaos of the real world, experts are bracing for a painful recalibration in expectations, a correction sometimes called “AI winter.” That delay could have disastrous consequences for companies banking on self-driving technology, putting full autonomy out of reach for an entire generation.

Logical Fallacies - robot edition

"Robots should take over the world! Premise accepted, please state your arguments". Here's an infographic showing logical fallacies in robots trying to take over the world.

Thank you for subscribing,

Conrad Gray (@conradthegray)

If you have any questions or suggestions, just reply to this email or tweet at @hplusweekly. I'd like to hear what you think about H+ Weekly.

Follow H+ Weekly!